Where design decisions actually live

I recreated iMessage in React Native and again in SwiftUI. One started from a Figma design system. The other started from Apple's documentation. The comparison changed how I think about where design decisions belong.

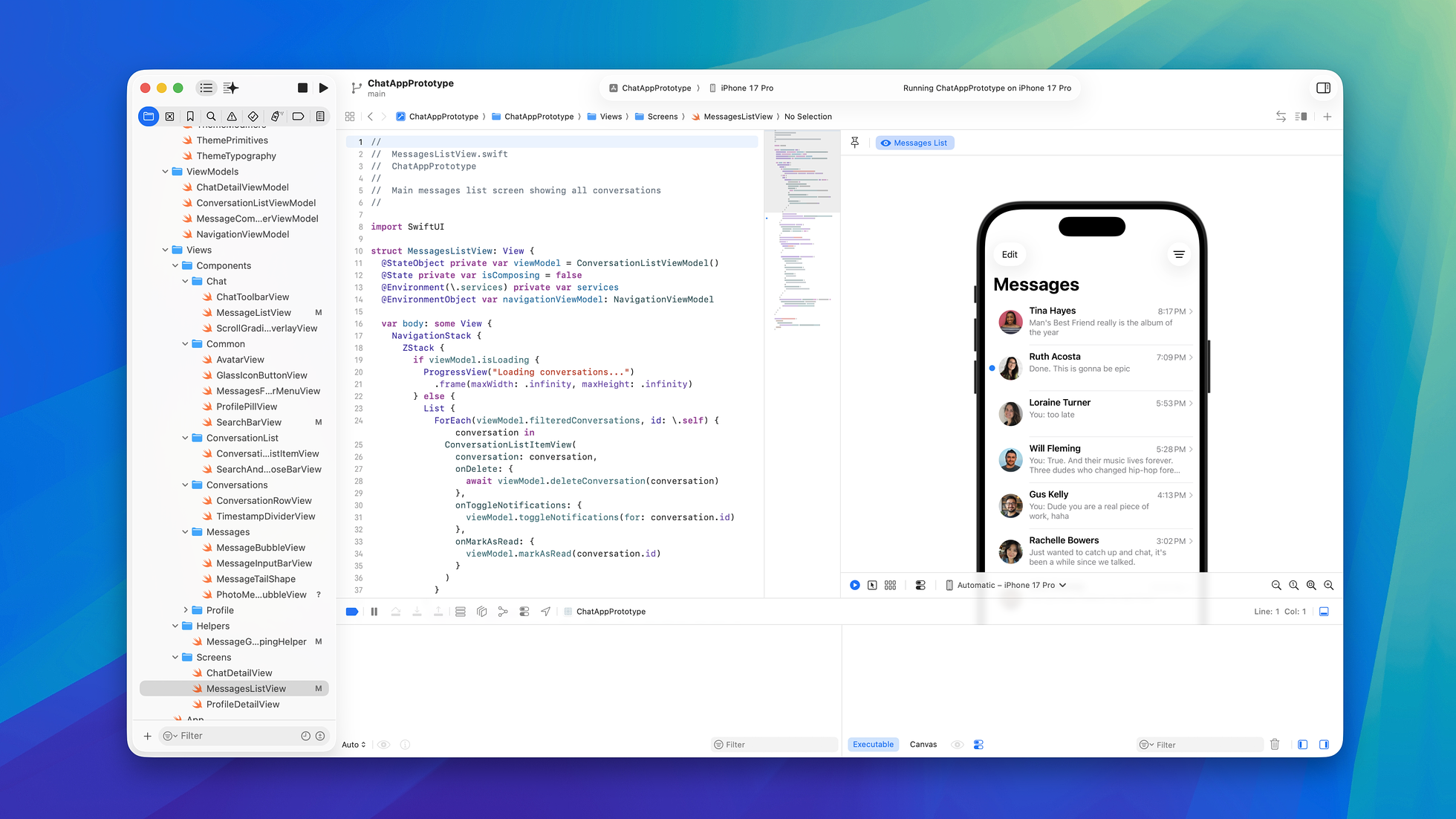

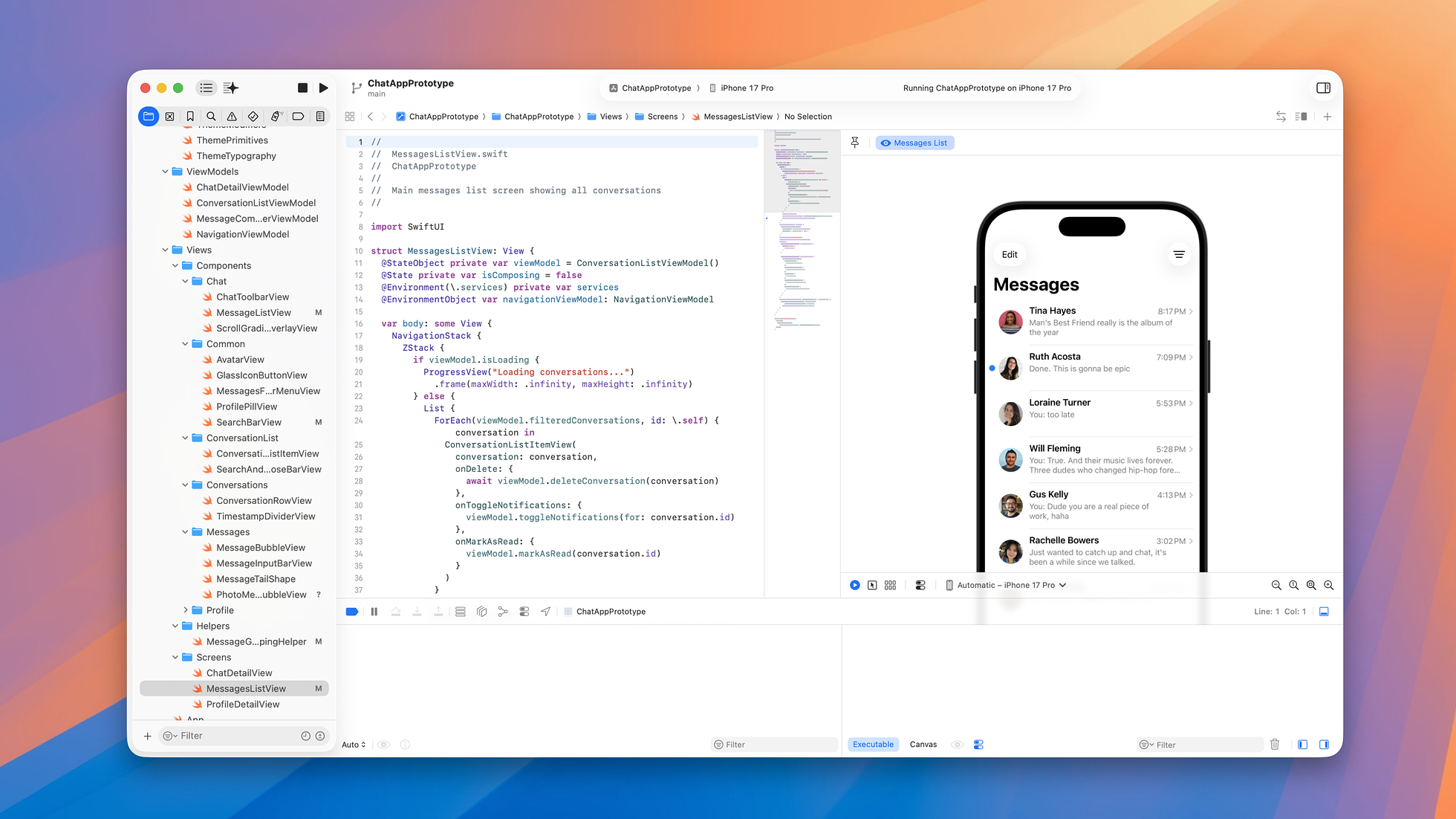

One app built above the platform, one built on it. SwiftUI with Liquid Glass on the left, React Native on the right.

I recreated iMessage twice. The first version started as a Figma design system, flowed through an MCP server into React Native, and became a prototype you can actually talk to. The second version I built natively in SwiftUI to learn Apple's Liquid Glass design language. I've been using iMessage as a test case whenever I want to learn a new platform: first to explore Figma's extensibility, now to understand what you lose when you design above the platform instead of on it.

From plugin to prototype

My previous essay on Figma plugins described the first two artifacts to come out of that work: an iMessage UI kit in Figma and a plugin that assembled AI-generated conversations into interactive prototypes on the canvas. When Figma released their Dev Mode MCP server in beta, I revisited the project. Claude Code could now read the UI kit directly and translate it into React Native components. While the plugin encoded domain knowledge into a prompt, the MCP server went a step further by encoding it into a protocol.

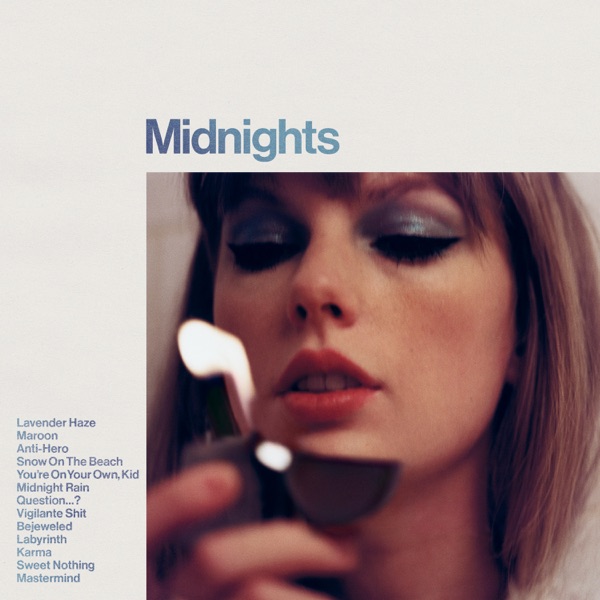

The app fetches real song data and assembles the bubble at runtime, all pulled from Apple Music the moment the AI decides to show it.

Designing in code

The React Native app embeds the design system rather than just referencing it. It includes theme tokens, a typed component library, visual testing through Storybook, and an AI backend powered by the Anthropic API that generates contextual responses. When you mention a song, the app inserts a music bubble with real Apple Music data. Ask for directions and it surfaces a map. The interface expands to meet the conversation and contracts when it passes.

A built-in viewer lets a designer step through every variant (horizontal bubble, vinyl, and anything else the system learns to render) without needing to run a conversation.

The component decisions happen at runtime, and the prototype is architected for legibility over scale. The app maintains a registry of bubble types. Each entry is self-contained (triggers, disambiguation, response format), so a designer can read the config and understand what the system will do:

    MUSIC: {
      type: 'music',
      confidence: 0.7,
      triggers: [
        'send me a song',
        'play a song',
        'what should I listen to',
        'recommend.*song',
      ],
      exclude: [
        'bird song',
        'that song from the movie',
        'song and dance',
      ],
      schema: {
        message: 'string',
        musicQuery: 'string',
      },
    }

When Claude's response matches the music format, the app fetches real song data, preloads the album art, extracts the artwork's color palette, and renders a bubble that looks and behaves like a native iMessage music share. The design decisions (what component to surface, what data to populate it with, when to expand the interface) are all made at runtime by the AI and the component system together.
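To make the registry idea concrete, here is a minimal sketch of how an entry like MUSIC could be evaluated. It is an assumption about the mechanics, not the app's actual code: the names `BubbleConfig`, `matchesAny`, `detectBubble`, and `fitsSchema` are hypothetical, every trigger is treated as a case-insensitive regular expression, and the `confidence` field is carried along but not used because its exact meaning isn't specified here.

```typescript
// Hypothetical shape mirroring the MUSIC entry; the logic below is a sketch.
interface BubbleConfig {
  type: string;
  confidence: number; // kept for completeness, not used in this sketch
  triggers: string[];
  exclude: string[];
  schema: Record<string, string>;
}

const MUSIC: BubbleConfig = {
  type: 'music',
  confidence: 0.7,
  triggers: ['send me a song', 'play a song', 'what should I listen to', 'recommend.*song'],
  exclude: ['bird song', 'that song from the movie', 'song and dance'],
  schema: { message: 'string', musicQuery: 'string' },
};

const registry = { MUSIC };

// Treat each entry as a case-insensitive regular expression, so plain
// phrases and patterns like 'recommend.*song' are handled the same way.
function matchesAny(patterns: string[], text: string): boolean {
  return patterns.some((pattern) => new RegExp(pattern, 'i').test(text));
}

// Return the first bubble type whose triggers match and whose excludes do not.
function detectBubble(text: string): string | null {
  for (const config of Object.values(registry)) {
    if (matchesAny(config.exclude, text)) continue;
    if (matchesAny(config.triggers, text)) return config.type;
  }
  return null;
}

// Check that a parsed response carries every schema field with the expected typeof.
function fitsSchema(config: BubbleConfig, response: Record<string, unknown>): boolean {
  return Object.entries(config.schema).every(
    ([key, expected]) => typeof response[key] === expected,
  );
}
```

Checking the exclude list before the triggers is one way to handle disambiguation: "bird song" never reaches the trigger test, so a designer reading the config can predict the outcome without tracing any scoring logic.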

A conversation about the Beastie Boys. The AI keeps pace, dropping a music bubble with real Apple Music data when it's relevant and clearing the stage when it's not.

This is what I described in my essay on Figma's changing role as "working software replacing the shared canvas." You can't judge whether a conversational system is working from a static frame. You have to be part of the conversation to see whether the rhythm works.

Going native

While the React Native app extended the Figma pipeline, I built a separate version in SwiftUI for a different reason: to learn Liquid Glass. This meant building the design system from scratch in Swift rather than deriving it from Figma, with its own primitives, semantic tokens, and theme modifiers written natively for the platform.

I built this with a version of Claude Code whose training data predated Liquid Glass by well over a year. It had no concept of the design language, and Apple's developer documentation (rendered entirely in JavaScript) returned empty pages when Claude Code tried to read it, so it kept falling back to the only thing it knew: old iOS blur patterns and UIVisualEffectView. I wrote a script to convert Apple's developer docs into markdown and fed them in manually, then built a rules system into the project's CLAUDE.md that taught it how Liquid Glass actually worked. I was learning the material system from the WWDC sessions and Human Interface Guidelines and teaching Claude Code at the same time.

The SwiftUI prototype in Xcode, with source on the left and a live simulator preview on the right. Running both at once made teaching Claude Code an unfamiliar design language workable.

At one point I gave it a code sample directly from a WWDC session. After struggling to make it compile, Claude convinced itself the API didn't exist, apologized for the hallucination, and offered to remove what it had written. I had to paste the Apple documentation back in to prove it was real. It was a useful reminder that working at the frontier means the tool's confidence model breaks down. It gets things wrong and then doubles down by trying to undo the correct answer.

One of the first things I wanted to get right was the message bubble gradient. In iMessage, sent messages aren't a flat blue. There's a continuous gradient that shifts across the conversation. Apple perfected this detail. It's not a design decision I made, but details like this are what separate a prototype that feels like a simulation from one that feels like the real thing in your hand. And it's exactly the kind of system-level polish that prototyping tools can't reproduce, no matter how much time you spend trying. In SwiftUI, this is achievable by passing a GeometryProxy from the parent view down to each bubble, rendering a screen-sized gradient, and masking it to the bubble's shape:

    Rectangle()
        .fill(ThemeColors.outgoingBubbleGradient)
        .mask(alignment: .topLeading) {
            RoundedRectangle(cornerRadius: ThemeDimensions.bubbleCornerRadius)
                .frame(width: geometry.size.width, height: geometry.size.height)
                .offset(x: rect.minX, y: rect.minY)
        }
        .offset(x: -rect.minX, y: -rect.minY)

The gradient is defined as a two-stop diagonal from a light cyan to a deeper blue, and because every bubble samples the same screen-space gradient, the color shifts subtly from message to message. It's a small detail that separates "looks like iMessage" from "feels like iMessage."
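The screen-space idea is easier to see as arithmetic. The sketch below only computes which color a point on screen would sample from a shared two-stop diagonal gradient; it is not a rendering technique and not the app's code. The color values, the top-leading to bottom-trailing direction, and the names `diagonalT` and `colorAt` are all assumptions made for illustration.

```typescript
type RGB = [number, number, number];

// Hypothetical stops: the essay only says "light cyan" to "a deeper blue".
const LIGHT_CYAN: RGB = [90, 200, 250];
const DEEP_BLUE: RGB = [0, 122, 255];

// Position along the screen diagonal, from 0 (top-leading corner) to 1
// (bottom-trailing corner), found by projecting the point onto the diagonal.
function diagonalT(x: number, y: number, width: number, height: number): number {
  const t = (x * width + y * height) / (width * width + height * height);
  return Math.min(1, Math.max(0, t));
}

// Linearly interpolate between the two stops at the point's diagonal position.
function colorAt(x: number, y: number, width: number, height: number): RGB {
  const t = diagonalT(x, y, width, height);
  const mix = (a: number, b: number): number => Math.round(a + (b - a) * t);
  return [
    mix(LIGHT_CYAN[0], DEEP_BLUE[0]),
    mix(LIGHT_CYAN[1], DEEP_BLUE[1]),
    mix(LIGHT_CYAN[2], DEEP_BLUE[2]),
  ];
}

// Two bubbles at the same x but different heights on a 400 x 800 screen
// sample different points of the same gradient.
const upperBubble = colorAt(300, 100, 400, 800);
const lowerBubble = colorAt(300, 700, 400, 800);
```

Because the input is the bubble's position on screen rather than its position in the list, the sampled color changes as the conversation scrolls, which is what makes the gradient read as continuous.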

This is effectively impossible in React Native, and equally so in Figma or any other prototyping tool. The gradient requires screen-coordinate rendering and arbitrary shape masking, capabilities that exist natively in SwiftUI but not in any abstraction layer above it. The React Native app uses a flat #007aff. A Figma mockup uses a static fill. Both are approximations of something only the native platform can do.

What the gap reveals

The gradient is one example. Liquid Glass is the bigger one.

Apple describes Liquid Glass as a "digital meta-material that dynamically bends and shapes light." At WWDC, they were explicit that motion and visuals were designed as one from the foundation. The material refracts content behind it in real time, adapts its tint based on underlying content brightness, flips between light and dark variants for legibility, and responds to touch with interior illumination that spreads to nearby glass elements. Craig Federighi said Apple designers fabricated physical glass samples of various opacities in their industrial design studios to match interface properties to real glass. The material's feasibility, he noted, depends on Apple silicon's computational power.

Two system-level details in one frame. Liquid Glass on the bottom bar, and the continuous gradient flowing across every sent bubble.

Figma has an iOS 26 design kit that recreates the visual surface, not the behavior underneath it. That gap has always existed. But Liquid Glass is the first design language that can't be meaningfully represented outside the platform it runs on. You can screenshot glass. You can't screenshot refraction.

The deeper issue is that Liquid Glass's core design principles describe relationships between the interface and its environment. "Establish a clear visual hierarchy where controls elevate and distinguish the content beneath them." "Align with the concentric design of the hardware." These are spatial and contextual. They describe how something feels in your hand, not how it looks on a screen. A Figma mockup can show you the visual language. It can't express the relationships that define it.

Mobile design has always been an act of translation. You design for a 6-inch screen on a 27-inch monitor, approximate touch interactions with mouse clicks, and evaluate the experience through a tool that can't simulate the thing you're actually making. Designers accepted that distance because building on-device was too slow to think in. That friction has inverted. Figma ships a native glass effect with real refraction and depth controls. It's a genuine shader, not a hack. But it's still a snapshot of a material, not the material itself. Building a working SwiftUI view with .glassEffect() is now faster than designing one in Figma, and the result actually responds to what's behind it. The proxy became slower than what it was proxying.

Susan Kare arrived at this same principle four decades ago. When she was designing icons for the original Macintosh, which shipped in 1984, Andy Hertzfeld built her an icon editor so she could work directly on the Mac, not on paper. The best design tool was the platform itself.

The historical case for cross-platform frameworks was always pragmatism. Maintaining multiple codebases is expensive, slows feature development, and typically requires platform-specific developers on staff. AI changes every variable in that equation. Claude Code can now help you build SwiftUI as fluently as React components, even when the design language is so new you're teaching it yourself. Without the time-and-cost arguments, the abstraction layer just costs you the design language of the platform itself.

The plugin essay was about closing the gap between design and code. This one is about what only shows up when you build the running interface itself.

The vinyl bubble from earlier, only this one you can actually grab. Scrub the record, tap to play, switch tracks with the artwork.

The design system, the React Native prototype, and the plugin are all open source on GitHub and the Figma Community.

About the author

Pat Dugan is a designer and engineer who has spent the last decade and a half shipping consumer products, building design systems, and growing teams at Google, Meta, Quora, Nextdoor, and the Chan Zuckerberg Initiative. These days he’s mostly thinking about how AI changes the way we make things.
