When the artwork is the algorithm

When I needed cover art for a podcast, I wrote a Hamiltonian cycle generator instead of prompting an image model. Here's why craft still matters when the cheapest path has never been cheaper.

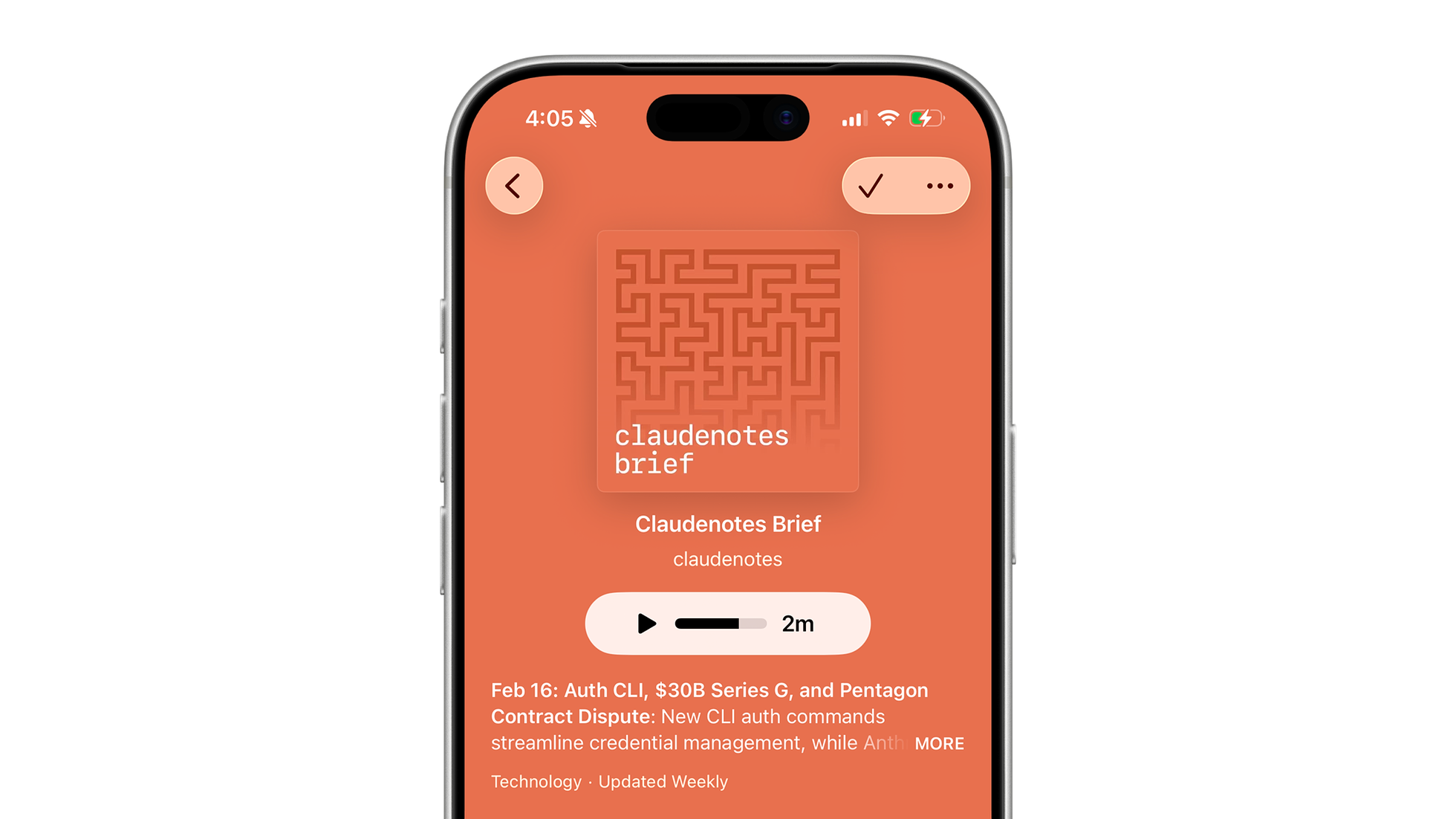

The claudenotes brief. A weekly podcast covering Claude Code updates and broader AI news, with cover art generated entirely by code.

When I needed cover art for a podcast I was preparing to launch, I wrote an algorithm instead of prompting an image generator. Doing so required making actual design decisions, which is why I can articulate and defend every one of them.

Why not just use AI?

That podcast, the claudenotes brief, covers Claude Code updates and broader AI news. It goes out every Monday alongside a newsletter. I launched it because the Anthropic team never seems to stop shipping, and I wanted a way to keep up without digging through release notes. Claude researches, authors, and publishes each issue. It even narrates the podcast. I designed the system, not the output. That's a defensible position for editorial curation. It's a harder one for visual identity.

For a solo side project, the temptation is to prompt an image generator. Type a few words, get something back, call it done. Bigger shows commission real designers for custom illustrations and bespoke typography, which is still the standard for anyone who takes visual identity seriously. I wanted that level of intentionality without the freelance budget.

Most AI-generated artwork feels soulless, and the process explains why. Prompting an image generator isn't designing. You're describing what you want and hoping the model gets close. There's no craft in the loop. No decisions being made at the level where design actually happens.

Art requires you to have something to say, and the craft to back it up. When you prompt an image generator, you're outsourcing both. The results read as nothing more than output without authorship.

Finding the pattern

So instead of prompting an image generator for the claudenotes artwork, I wrote code. I didn't land on meander patterns immediately. The exploration started with gradients, simple linear fades in the Anthropic brand palette. Safe, but generic. Then dot grids. Then U-shaped stripes and S-curves, each one closer to something with visual texture but still not distinctive.

The meander idea came from Anni Albers. Albers was a Bauhaus-trained textile artist, but the remarkable thing about her work wasn't the weaving itself. It was her relationship with the constraint of the loom. A loom is fundamentally a grid: warp and weft threads intersecting at right angles. Every weaver works within that structure. What Albers did was design threading plans (sequences of instructions the loom would execute) that injected freedom into the grid. Her meander patterns follow rigid rules at the cell level but produce results that feel organic, almost hand-drawn. Decades before anyone was talking about generative art, she was writing algorithms in thread.

It's the same idea at a much smaller scale. Simple rules and hard constraints can produce something that reads as intentional design rather than generative noise.

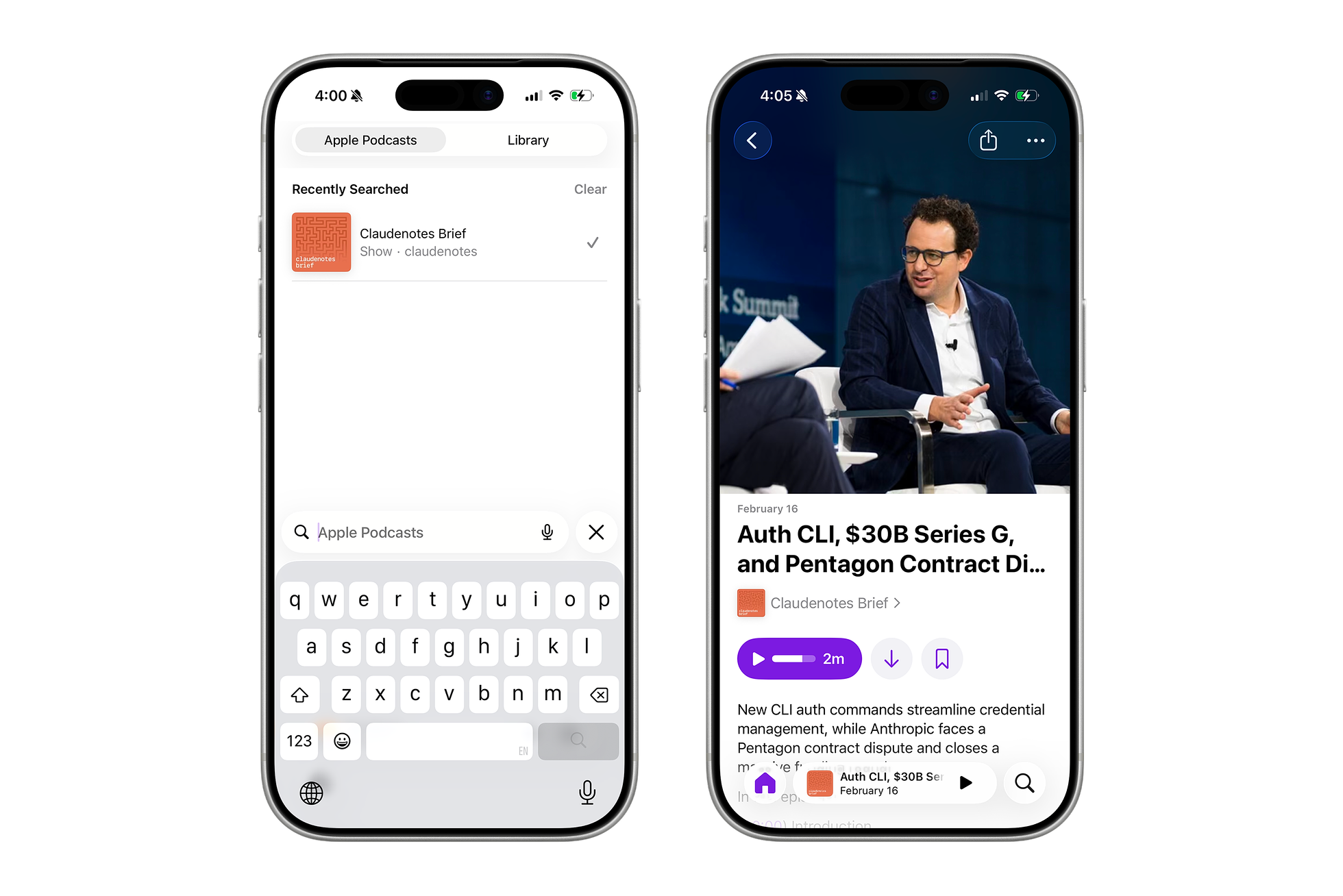

The artwork in context: search results (left) and an episode page (right) in Apple Podcasts. The meander pattern reads clearly even at small sizes.

How the algorithm works

The core of the system is a Hamiltonian cycle generator. It starts with a serpentine path on a grid, a simple back-and-forth pattern that visits every cell, then applies thousands of random mutations to reshape it into something more interesting.
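As a rough illustration of that starting point (not the code from meander.js, whose internals aren't shown here), a serpentine layout can be sketched in a few lines. The function names and the list-of-[row, col] representation are assumptions made for the example.

```javascript
// Illustrative sketch only: not the actual meander.js code.
// Builds a serpentine (back-and-forth) path over a rows x cols grid.
function serpentinePath(rows, cols) {
  const path = [];
  for (let r = 0; r < rows; r++) {
    for (let i = 0; i < cols; i++) {
      // Even rows run left to right, odd rows run right to left.
      const c = r % 2 === 0 ? i : cols - 1 - i;
      path.push([r, c]);
    }
  }
  return path;
}

// Checks that every step moves to an orthogonally adjacent cell.
function isContiguous(path) {
  return path.every(([r, c], i) => {
    if (i === 0) return true;
    const [pr, pc] = path[i - 1];
    return Math.abs(r - pr) + Math.abs(c - pc) === 1;
  });
}

const path = serpentinePath(4, 5);
console.log(path.length, isContiguous(path)); // 20 true
```

This sketch produces an open path that visits every cell once. How the generator closes it into a cycle isn't described here. The mutation loop below is what reshapes that starting layout.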

    for (let m = 0; m < numMutations; m++) {
      const candidates = [];
      for (let r = 0; r < cellRows; r++) {
        for (let c = 0; c < cellCols; c++) {
          if (grid[r][c] !== 1) continue;
          const above = getCell(r - 1, c);
          const below = getCell(r + 1, c);
          const left = getCell(r, c - 1);
          const right = getCell(r, c + 1);
          if (above !== left && above === below && left === right) {
            candidates.push([r, c]);
          }
        }
      }
      // ... pick a random candidate, break the cycle, find a fuse point
    }

The key insight is the mutation eligibility check. A cell can only be removed if its neighbors form a specific pattern: `above !== left && above === below && left === right`. This ensures the cycle stays connected after each mutation. After 6,000–8,000 mutations driven by a mulberry32 PRNG, the rigid serpentine dissolves into something that looks hand-drawn.
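To see what that check accepts, it can be pulled out and run on tiny grids. This is a sketch under stated assumptions: cells hold 0 or 1, and getCell treats anything outside the grid as 0. The real getCell isn't shown, and findCandidates is a name made up for the example.

```javascript
// Illustrative sketch; only the neighbor test itself comes from the article.
// Assumption: cells outside the grid count as empty (0).
function getCell(grid, r, c) {
  const inside = r >= 0 && r < grid.length && c >= 0 && c < grid[0].length;
  return inside ? grid[r][c] : 0;
}

// Collects every filled cell whose neighbors pass the eligibility check.
function findCandidates(grid) {
  const candidates = [];
  for (let r = 0; r < grid.length; r++) {
    for (let c = 0; c < grid[0].length; c++) {
      if (grid[r][c] !== 1) continue;
      const above = getCell(grid, r - 1, c);
      const below = getCell(grid, r + 1, c);
      const left = getCell(grid, r, c - 1);
      const right = getCell(grid, r, c + 1);
      if (above !== left && above === below && left === right) {
        candidates.push([r, c]);
      }
    }
  }
  return candidates;
}

const verticalBar = [
  [0, 1, 0],
  [0, 1, 0],
  [0, 1, 0],
];
const solidBlock = [
  [1, 1, 1],
  [1, 1, 1],
  [1, 1, 1],
];

console.log(JSON.stringify(findCandidates(verticalBar))); // [[1,1]]
console.log(JSON.stringify(findCandidates(solidBlock))); // []
```

With binary cells, the test passes only when the vertical pair matches, the horizontal pair matches, and the two pairs differ. In other words, the cell sits in the middle of a straight run, filled along one axis and empty along the other.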

The meander contour traces itself in a single continuous loop, then the wordmark and gradient fade in. Same algorithm, same seed, every time.

Everything is seeded. The same input always produces the same output, which means I can tweak a single parameter, regenerate, and see exactly what changed. It's version control for visual design.
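For reference, mulberry32 is a small, widely circulated seeded generator. The version below is the commonly shared form, not necessarily the exact copy used in the project. The seed value 999 is borrowed from the CLI example further down, purely for illustration.

```javascript
// mulberry32: a compact 32-bit seeded PRNG (commonly circulated form).
// Returns a function that yields floats in [0, 1).
function mulberry32(seed) {
  let a = seed >>> 0;
  return function () {
    a = (a + 0x6d2b79f5) | 0;
    let t = Math.imul(a ^ (a >>> 15), 1 | a);
    t = (t + Math.imul(t ^ (t >>> 7), 61 | t)) ^ t;
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Two generators built from the same seed produce the same sequence.
const first = mulberry32(999);
const second = mulberry32(999);
const seqA = Array.from({ length: 5 }, () => first());
const seqB = Array.from({ length: 5 }, () => second());
console.log(seqA.every((v, i) => v === seqB[i])); // true
```

Because Math.random() can't be seeded, swapping it for a generator like this is what makes a run repeatable: every random choice in the mutation loop replays in the same order.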

Designing the system, not the output

There's no interpretation layer between me and the output, no model deciding what I probably meant. Every parameter that shaped the artwork is a CLI flag I can set or change, so the generator scripts treat visual parameters like any other configuration:

    node generate-backgrounds.js --only 07 --seed 999 --line-weight 0.50
    node generate-backgrounds.js --only 07 --grid 21 --layout fade

I ended up building three separate generators from the same shared `meander.js` core: one for square podcast covers, one for Apple Podcasts full-page showcase art, and one for ultra-wide hero banners. The core algorithm doesn't change. Only the dimensions and fade strategies adapt to each surface.
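One way flags like the ones in those commands could be read is sketched below. The flag names come from the commands themselves. The parser, the default values, and the option object are hypothetical, since the real script's argument handling isn't shown.

```javascript
// Illustrative sketch: flag names are from the article's commands;
// the defaults and the parsing logic are assumptions.
const DEFAULTS = { only: null, seed: 1, grid: 21, lineWeight: 0.5, layout: "fade" };

function parseFlags(argv) {
  const opts = { ...DEFAULTS };
  // Flags are expected as "--name value" pairs.
  for (let i = 0; i < argv.length; i += 2) {
    const flag = argv[i];
    const value = argv[i + 1];
    if (value === undefined) throw new Error(`Missing value for ${flag}`);
    switch (flag) {
      case "--only":
        opts.only = value; // kept as a string so "07" keeps its leading zero
        break;
      case "--seed":
        opts.seed = Number.parseInt(value, 10);
        break;
      case "--grid":
        opts.grid = Number.parseInt(value, 10);
        break;
      case "--line-weight":
        opts.lineWeight = Number.parseFloat(value);
        break;
      case "--layout":
        opts.layout = value;
        break;
      default:
        throw new Error(`Unknown flag: ${flag}`);
    }
  }
  return opts;
}

const opts = parseFlags(["--only", "07", "--seed", "999", "--line-weight", "0.50"]);
console.log(opts.only, opts.seed, opts.lineWeight); // 07 999 0.5
```

In a real script the input would be process.argv.slice(2). Rejecting unknown flags is a deliberate choice in this sketch: a typo in a visual parameter should fail loudly rather than silently fall back to a default.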

The palette tells a story too

The monochrome variants layer dark orange lines over an orange ground, producing a tone-on-tone effect that feels like debossed paper. The poster variants shift to terracotta on warm cream, which reads more like a mid-century design print. The underlying algorithm is exactly the same, but the palette changes what the artwork communicates. The gradient fade leaves clean space for the show title, with start and end points I tuned across iterations.
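A fade with tunable start and end points can be expressed as a clamped ramp. The sketch below assumes a linear falloff and positions given as fractions of the canvas. Both are guesses for illustration, and fadeStart and fadeEnd are hypothetical parameter names.

```javascript
// Illustrative sketch: pattern opacity along one axis of the canvas.
// t, fadeStart and fadeEnd are fractions of the canvas length (0..1).
function fadeOpacity(t, fadeStart, fadeEnd) {
  if (fadeEnd <= fadeStart) throw new Error("fadeEnd must be greater than fadeStart");
  const progress = (t - fadeStart) / (fadeEnd - fadeStart);
  // Fully visible before fadeStart, fully hidden after fadeEnd.
  return 1 - Math.min(1, Math.max(0, progress));
}

console.log(fadeOpacity(0.1, 0.25, 0.75)); // 1
console.log(fadeOpacity(0.5, 0.25, 0.75)); // 0.5
console.log(fadeOpacity(0.9, 0.25, 0.75)); // 0
```

Moving the two points changes how much clean space is left for the title without touching the pattern itself.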

Each seed produces a unique pattern. Same algorithm, different seed: every pattern is unique, but the visual language stays consistent.

People can tell

When OpenAI launched Sora, a former researcher called it an "infinite AI TikTok slop machine." He wasn't wrong. The novelty lasts about five minutes. You type a prompt, a video materializes, and you move on. There's nothing to return to, nothing that rewards a second look. The numbers bear that out: Sora lost 99% of its active users within 30 days of launch, and downloads fell 45% month over month after that. The novelty fades fast when there's no authorship underneath.

When Apple rebranded Apple TV in late 2025, they could have rendered the new logo in CGI. Instead, they cut the Apple logo and the "tv" letters from actual glass, mounted them in a studio, and filmed a crew member physically moving lights across the surface. The refraction of light through real material, something still extraordinarily difficult to simulate convincingly, gives the piece a richness that no synthetic workflow could replicate. They chose the harder path because the result is better, and because the audience can feel the difference even if they can't articulate it.

The same instinct shows up across production work. Severance season two contains roughly 3,500 visual effects shots designed to be invisible. Project Hail Mary used over 2,000 VFX shots with zero green screens, extending physical reality rather than replacing it. In both cases, success means the audience never notices the work at all. That takes more skill, not less.

These choices aren't nostalgia. The cheapest path has never been cheaper, and these companies could have taken it. They didn't, because shortcuts show up in the final work.

Why I'd do it again

None of this is anti-AI. Claude wrote the Hamiltonian cycle algorithm at the core of the generator, and worked through the edge cases in the mutation logic when my first approach broke. Every design decision was still mine to make.

A recent episode of the claudenotes brief. Claude also mixes and levels each one. Engineering in service of authorship, not a replacement for it.

The artwork can be regenerated at any scale or modified with a single flag, and every variant traces back to a parameter I set. That's what made the algorithm worth writing instead of prompting for an image. Authorship isn't just the output; it's the chain of decisions that produced it.

There will be better image generators next year. What there won't be is a better record of my thinking.

About the author

Pat Dugan is a designer and engineer who has spent the last decade and a half shipping consumer products, building design systems, and growing teams at Google, Meta, Quora, Nextdoor, and the Chan Zuckerberg Initiative. These days he’s mostly thinking about how AI changes the way we make things.
