Building recommendations with nothing to sell

The reason most recommendations feel off isn't the algorithm. It's that the platform recommending content is also the platform selling it. I built a cross-platform alternative to test that theory.

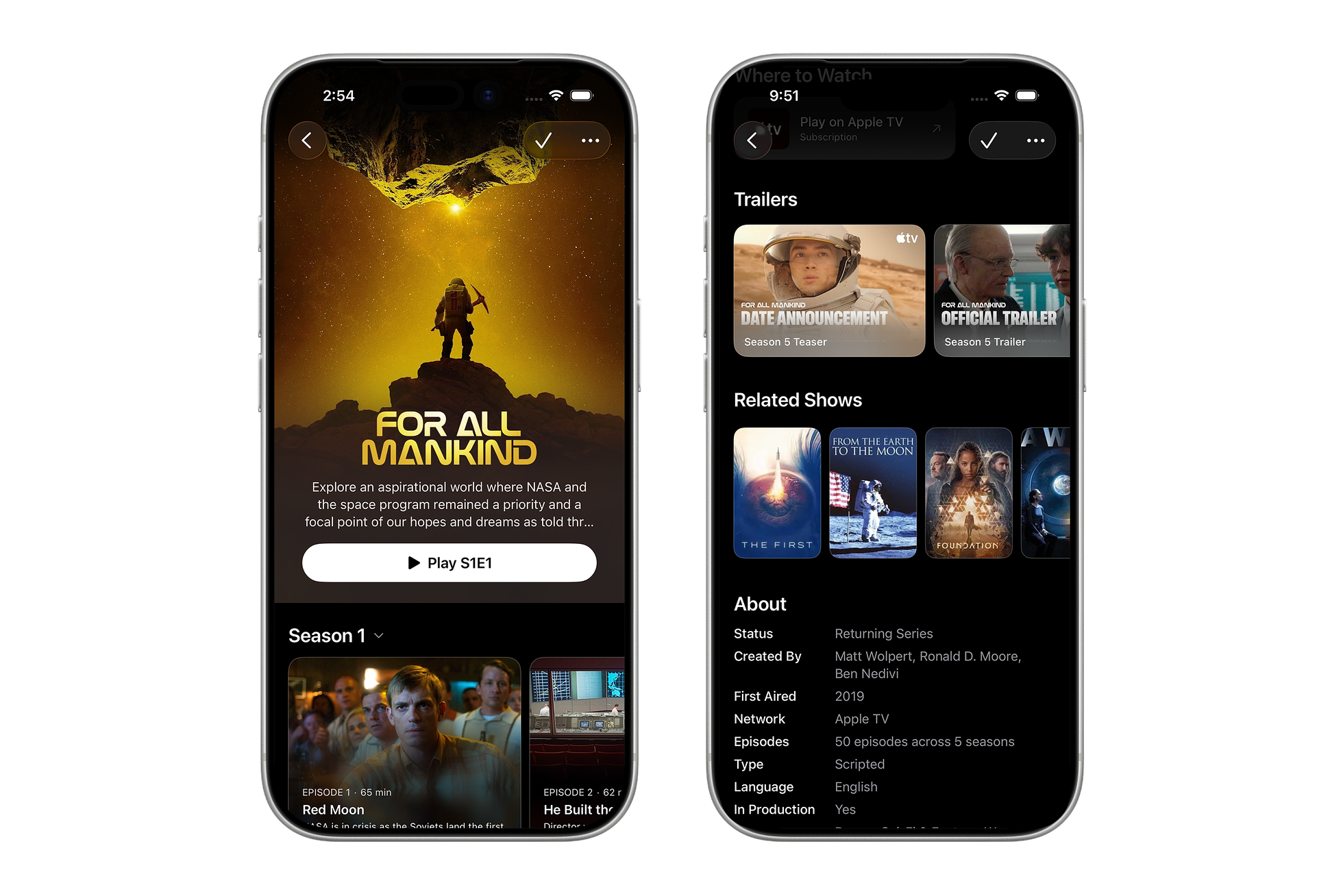

NextUp's related shows for For All Mankind. A shared taste profile the UI never surfaces holds them together.

Every recommendation algorithm serves someone. The question is whether it's you. Spotify lets labels pay for algorithmic placement. Netflix surfaces its own originals through a recommendation page the company's own researchers call the single biggest driver of engagement. YouTube's dislike button barely changes what gets recommended. The pattern repeats across every platform: the algorithm's first loyalty is to the business model, not the viewer.

It's a principal-agent problem. The platform recommending content is also the platform selling it, and users are smart enough to sense when platforms bias recommendations toward their own content to protect margins, a pattern studies confirm. Almost every returning English-language series on Netflix lost audience in 2025. The platform is growing, but the content it recommends isn't holding attention. These algorithms aren't broken. They're optimizing for the platforms' priorities, which happen to diverge from yours.

There's a meaningful difference between an algorithm trying to model your taste and one trying to move its own catalog. NextUp is a cross-platform TV tracker I built around that distinction: given a show you like, what else might you like, regardless of where it's streaming?

Describing what makes a show worth recommending in the first place was the hard part. The algorithm came later.

Related shows for Obi-Wan Kenobi. More Star Wars is probably what a fan wants; the engine agrees, but gets there through tone and structure rather than franchise loyalty.

The taxonomy problem

Recommendation quality starts with how well you describe what a show actually is. Companies like Netflix and Warner Bros. can invest in proprietary classification systems. Everyone else works with what's publicly available: databases like TMDB and TheTVDB, crowdsourced by volunteers who care enough about TV to catalog it for free. The data is generous and surprisingly comprehensive, but the taxonomy is shallow. TMDB assigns one or two genres per show. Both Silo and Euphoria are labeled "Drama." That's technically accurate and practically useless for recommendation.

Closing that gap has historically required serious investment. In 2007, Netflix VP of Product Todd Yellin started hiring aspiring screenwriters to tag every piece of content against a 36-page taxonomy covering roughly 200 data points: tone, violence levels, romance, plot conclusiveness, character morality, setting, era. By 2014, that tagging work had produced 76,897 unique micro-genres with names like "Cerebral French Art House Movies" and "Dark Suspenseful Gangster Dramas." It worked. Pandora did something similar with the Music Genome Project: 450 attributes per song, analyzed by trained musicologists spending 20-30 minutes per track.

Today, the kind of structured tagging that once required teams of screenwriters and musicologists can be done programmatically by AI, at a fraction of the cost.

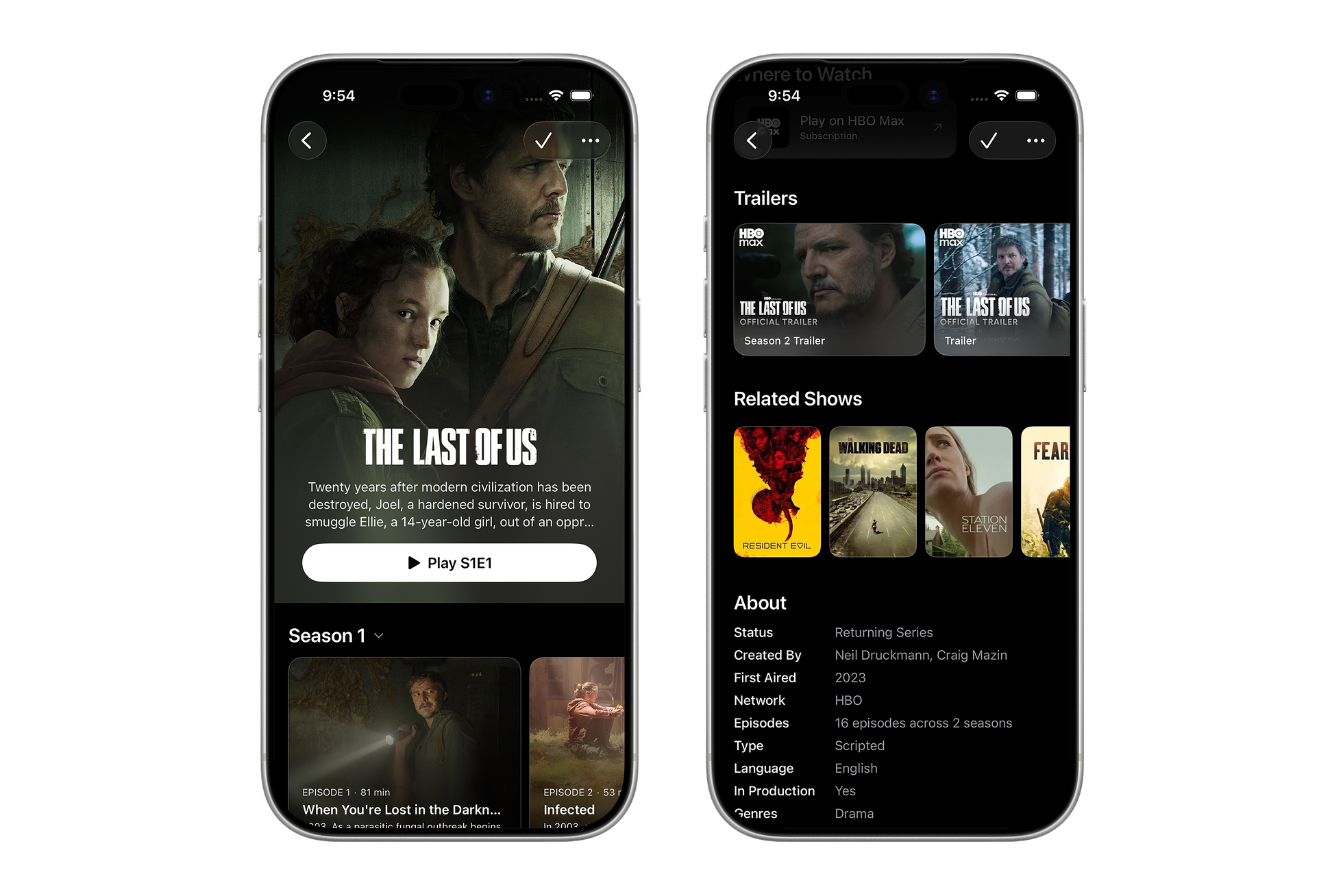

Two zombie shows show up for the obvious reason. Station Eleven shows up for the actual one: post-apocalyptic grief, slow-burn, no monsters required.

A taxonomy built for the viewer

I built an enrichment layer that generates a structured taxonomy for every show in NextUp's catalog. Rather than relying on a single genre label, each show gets tagged across eight dimensions covering tone, themes, narrative structure, audience profile, and more.

Take tone. Where most systems flag a show as "dark," the enrichment layer captures multiple dimensions of how the tone moves through a season. Severance might be tagged as suspenseful and philosophical, with a darkening emotional arc. The Leftovers shares that profile. A generic sci-fi show doesn't, even though the genre labels might overlap.

Themes work the same way. The taxonomy draws from a vocabulary of 139 terms organized across eight categories: family and relationships, moral and emotional, power dynamics, identity, and so on. A show like Silo picks up themes like survival, dystopia, and hierarchy-challenge. Euphoria picks up identity-exploration, self-destruction, and coming-of-age. TMDB calls them both "Drama." The enrichment layer captures why they appeal to completely different audiences.

The prompt includes the full taxonomy of valid values, three worked examples (Breaking Bad, Brooklyn Nine-Nine, The Expanse), a verification checklist, and retry logic for validation failures. The schema is versioned: when a dimension changes, every show gets re-enriched and the results are diffed against the previous version. I tested the enrichment across multiple models and iterated on the prompt and schema together, treating them as a single system rather than independent pieces.
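The validate-and-retry loop described above might look something like the sketch below. It is an illustration, not NextUp's actual code: the vocabulary slices, the `validate` and `enrich_with_retry` names, and the `generate` callback (a stand-in for the model call) are all assumptions made for the example.

```python
from typing import Callable

# Hypothetical slices of the controlled vocabulary; the real taxonomy is larger.
ALLOWED_DNA = {
    "pacing": {"slow-burn", "moderate", "fast"},
    "focus": {"character", "plot", "world"},
}
ALLOWED_THEMES = {"sacrifice", "perseverance", "survival", "dystopia", "duty-vs-desire"}


def validate(enrichment: dict) -> list[str]:
    """Return a list of human-readable problems; an empty list means valid."""
    errors = []
    dna = enrichment.get("contentDNA", {})
    for field, allowed in ALLOWED_DNA.items():
        value = dna.get(field)
        if value not in allowed:
            errors.append(f"contentDNA.{field}: {value!r} not in vocabulary")
    for theme in enrichment.get("themes", []):
        if theme not in ALLOWED_THEMES:
            errors.append(f"themes: {theme!r} not in vocabulary")
    return errors


def enrich_with_retry(generate: Callable[[list[str]], dict], max_attempts: int = 3) -> dict:
    """Call `generate` until its output validates, passing prior errors back in."""
    errors: list[str] = []
    for _ in range(max_attempts):
        candidate = generate(errors)  # assumed to wrap the prompt + model call
        errors = validate(candidate)
        if not errors:
            return candidate
    raise ValueError(f"still invalid after {max_attempts} attempts: {errors}")
```

Passing the previous attempt's errors back to the generator lets the retry prompt name exactly which values fell outside the vocabulary, rather than simply asking again.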

Here's what that produces for For All Mankind. The system has no access to marketing copy or platform positioning. It doesn't know this is an alt-history Apple TV prestige drama. But what it can tell you is that the series is a slow-burn, character-focused space opera about sacrifice and duty, with transformative emotional arcs.

    {
      "toneProfile": {
        "primary": "dramatic",
        "secondary": "inspirational",
        "overall": "serious",
        "emotionalArc": "transformative"
      },
      "contentDNA": {
        "focus": "character",
        "narrative": "serialized",
        "pacing": "slow-burn"
      },
      "themes": [
        "political-intrigue",
        "sacrifice",
        "duty-vs-desire",
        "perseverance",
        "hope-in-despair"
      ],
      "audience": {
        "demographic": "adult",
        "maturity": "mature",
        "skew": "balanced",
        "culturalContext": "american"
      },
      "relationshipFocus": "ensemble",
      "subgenre": "space-opera"
    }

Each show also gets a 1024-dimensional vector embedding from Voyage AI, generated from the concatenated enrichment text. These embeddings capture semantic similarity that the structured fields miss. Shows that don't share exact theme labels but occupy similar conceptual space still score well against each other.
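Cosine similarity is one common way to compare two embeddings. The article doesn't say which measure NextUp uses, so treat the sketch below as an assumption; the three-dimensional vectors stand in for the real 1024-dimensional ones.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length vectors, in [-1, 1]."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as having no similarity to anything
    return dot / (norm_a * norm_b)


# Toy vectors for illustration only.
show_a = [0.2, 0.7, 0.1]
show_b = [0.25, 0.65, 0.05]
similarity = cosine_similarity(show_a, show_b)
```

Because the measure depends only on direction, two shows described with different amounts of enrichment text can still land close together.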

The enrichment and embeddings feed into a scoring engine alongside more conventional signals: genre and tag data from TheTVDB, keywords, network and creator information. Each candidate show gets scored across all of these dimensions, producing a ranked list with structured match reasons that explain why each show was recommended, not just that it was.
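As a rough sketch of how weighted signals and match reasons can come out of the same pass, consider the function below. The weights, the four signals, and the flattened show shape (`tone`, `narrative`, `themes`) are hypothetical simplifications; the real engine uses many more signals.

```python
# Hypothetical weights; they sum to 1 so the final score stays in [0, 1].
WEIGHTS = {"embedding": 0.4, "themes": 0.3, "tone": 0.15, "structure": 0.15}


def jaccard(a: set, b: set) -> float:
    """Overlap between two sets: |intersection| / |union|."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def score(source: dict, candidate: dict, embedding_sim: float) -> tuple[float, list[str]]:
    """Return a weighted score and the reasons that contributed to it."""
    source_themes = set(source["themes"])
    candidate_themes = set(candidate["themes"])
    shared = source_themes & candidate_themes

    signals = {
        "embedding": embedding_sim,
        "themes": jaccard(source_themes, candidate_themes),
        "tone": 1.0 if source["tone"] == candidate["tone"] else 0.0,
        "structure": 1.0 if source["narrative"] == candidate["narrative"] else 0.0,
    }

    reasons = []
    if signals["tone"]:
        reasons.append(f"{source['tone']} tone")
    if shared:
        reasons.append("shared themes: " + ", ".join(sorted(shared)))
    if signals["structure"]:
        reasons.append(source["narrative"])

    total = sum(WEIGHTS[name] * value for name, value in signals.items())
    return round(total, 3), reasons
```

Building the reasons from the same signal values that produce the score keeps the explanation tied to the ranking instead of being written after the fact.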

When the scoring engine runs For All Mankind through the full pipeline, the results converge on shared structural traits rather than on the show's platform or franchise. The top three span Hulu, HBO, and Apple TV, connected by shared tone, themes, and narrative structure rather than by which service happens to carry them.

    [
      {
        "rank": 1,
        "name": "The First",
        "score": 0.725,
        "topReasons": [
          "dramatic tone",
          "shared themes: sacrifice, perseverance",
          "space-opera",
          "character-focused",
          "serialized"
        ]
      },
      {
        "rank": 2,
        "name": "From the Earth to the Moon",
        "score": 0.715,
        "topReasons": [
          "dramatic tone",
          "shared themes: sacrifice, duty-vs-desire, perseverance",
          "character-focused",
          "ensemble"
        ]
      },
      {
        "rank": 3,
        "name": "Foundation",
        "score": 0.71,
        "topReasons": [
          "dramatic tone",
          "space-opera",
          "character-focused",
          "serialized",
          "slow-burn pacing"
        ]
      }
    ]

Proving it works

A recommendation engine with dozens of weighted signals is easy to break and hard to evaluate by feel. Before tuning anything, I built an evaluation pipeline. The test dataset started with 80 shows for which I'd manually curated the expected recommendations with Claude. That set wasn't a ground truth the algorithm needed to match exactly, but a consistent reference point for measuring whether changes made things better or worse. Every proposed weight change runs as a formal experiment against industry-standard metrics (Precision@K, nDCG, Mean Reciprocal Rank), with paired t-tests for statistical significance. The decision to ship or abandon comes from the numbers.
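For readers unfamiliar with those metrics, here is a minimal standard-library sketch with binary relevance (a show either is or isn't in the curated set). The function names are mine, and the t-test helper returns only the statistic; a p-value additionally needs the t distribution with n-1 degrees of freedom, which a library such as SciPy (`scipy.stats.ttest_rel`) computes.

```python
import math
from statistics import mean, stdev


def precision_at_k(ranked: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k results that appear in the curated set."""
    return sum(1 for item in ranked[:k] if item in relevant) / k


def reciprocal_rank(ranked: list[str], relevant: set[str]) -> float:
    """1 / position of the first relevant result, or 0 if none appears."""
    for position, item in enumerate(ranked, start=1):
        if item in relevant:
            return 1.0 / position
    return 0.0


def ndcg_at_k(ranked: list[str], relevant: set[str], k: int) -> float:
    """Discounted gain of the ranking relative to an ideal ordering."""
    dcg = sum(
        1.0 / math.log2(position + 1)
        for position, item in enumerate(ranked[:k], start=1)
        if item in relevant
    )
    ideal_hits = min(len(relevant), k)
    ideal = sum(1.0 / math.log2(position + 1) for position in range(1, ideal_hits + 1))
    return dcg / ideal if ideal else 0.0


def paired_t_statistic(before: list[float], after: list[float]) -> float:
    """t statistic for per-show metric differences (after minus before)."""
    diffs = [a - b for a, b in zip(after, before)]
    spread = stdev(diffs)  # requires at least two paired observations
    if spread == 0:
        raise ValueError("all differences are identical; t is undefined")
    return mean(diffs) / (spread / math.sqrt(len(diffs)))
```

Mean Reciprocal Rank is the average of `reciprocal_rank` over every show in the test set. The paired test compares the same shows before and after a change, so it measures the change itself, not how much shows differ from each other.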

The initial algorithm produced zero useful recommendations for a class of high-concept shows like Severance, The Leftovers, and Shrinking. These shows had sparse metadata in the public databases, so the tag-heavy scoring dimensions had nothing to work with. The pipeline caught it. The fix was targeted: an adaptive weight profile that shifts enrichment-heavy for shows where standard metadata is thin, without touching the weights for everything else. The metrics confirmed it worked. Without the evaluation infrastructure, I would have been guessing.
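An adaptive profile of that kind can be as simple as choosing between two weight tables based on how much standard metadata a show has. The sketch below assumes a tag-and-keyword count as the sparsity test; the threshold, the weight values, and the field names are all hypothetical.

```python
# Hypothetical weight tables; each sums to 1.
DEFAULT_WEIGHTS = {"tags": 0.4, "keywords": 0.2, "enrichment": 0.2, "embedding": 0.2}
SPARSE_WEIGHTS = {"tags": 0.1, "keywords": 0.1, "enrichment": 0.4, "embedding": 0.4}

# Assumed cutoff: fewer than this many tags plus keywords counts as "thin".
SPARSE_THRESHOLD = 3


def pick_weights(show: dict) -> dict:
    """Shift weight toward enrichment and embeddings when metadata is thin."""
    metadata_count = len(show.get("tags", [])) + len(show.get("keywords", []))
    if metadata_count < SPARSE_THRESHOLD:
        return SPARSE_WEIGHTS
    return DEFAULT_WEIGHTS
```

Shows with ordinary metadata never touch the sparse table, which matches the goal of fixing one class of shows without moving the weights for everything else.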

Over four baseline versions and 27 experiments, Precision@10 rose from 20.5% to 37.1%. The wins tracked the gaps each new signal was designed to fill. Mare of Easttown and Downton Abbey each jumped 40 percentage points when embeddings arrived, capturing the kind of tonal similarity that no tag vocabulary could express. A later round of keyword tuning delivered its own gains. Structured metadata still pulls its weight even alongside embeddings. The experiments that didn't move the metrics were abandoned just as quickly.

Precision@10 across four shipped baselines. The v0.2 → v0.3 jump is the moment embeddings entered the scoring engine, and it was the single biggest win in 27 experiments.

The whole pipeline took less than a day to build. A side project can now run the same evaluation methodology Netflix and Spotify use. I set up weight sweeps across embedding, keyword, and enrichment signals to run overnight. By morning, the data was clear enough to act on.
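A weight sweep can be sketched as a small grid search: try each combination, score it against the test set, and sort. In the example below, `evaluate` is a hypothetical callback that would run the evaluation pipeline and return a single metric such as mean Precision@10; the step values and the constraint that the three weights sum to 1 are assumptions for illustration.

```python
from itertools import product
from typing import Callable


def sweep(
    evaluate: Callable[[dict], float],
    steps: tuple[float, ...] = (0.2, 0.4, 0.6),
) -> list[tuple[float, dict]]:
    """Score every valid weight combination, best first."""
    results = []
    for embedding, keyword in product(steps, repeat=2):
        # Give whatever weight remains to the enrichment signal.
        enrichment = round(1.0 - embedding - keyword, 10)
        if enrichment < 0:
            continue  # combination overshoots the total budget of 1
        weights = {"embedding": embedding, "keyword": keyword, "enrichment": enrichment}
        results.append((evaluate(weights), weights))
    results.sort(key=lambda pair: pair[0], reverse=True)
    return results
```

The grid grows quickly as signals and step values are added, which is one reason a sweep like this is a natural job to leave running overnight.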

What the tools don't solve

The technical barriers to building a good recommendation engine have largely collapsed. A structured taxonomy that would have required a team of screenwriters and musicologists can be generated programmatically. An evaluation pipeline that would have been a quarter-long infrastructure project took an afternoon. The tools are genuinely accessible now.

But accessibility doesn't create incentive. The platforms with the largest audiences and the most viewing data have no reason to recommend content that sends users to a competitor. The platforms best positioned to build cross-platform recommendation have business models that make it irrational. That conflict isn't a technical problem and it won't be solved by better algorithms.

NextUp is a side project. It doesn't have behavioral data from millions of users or content deals that shape what gets surfaced. The only thing the algorithm is optimizing for is whether you'd actually like the show. Whether that's enough to earn trust against platforms people already use is the harder question.

About the author

Pat Dugan is a designer and engineer who has spent the last decade and a half shipping consumer products, building design systems, and growing teams at Google, Meta, Quora, Nextdoor, and the Chan Zuckerberg Initiative. These days he’s mostly thinking about how AI changes the way we make things.
