A modern approach to testing Swift

How I built a multi-tiered testing system for an iOS app. Not for the coverage metric, but to give an AI coding agent the confidence to make sweeping changes without breaking things.

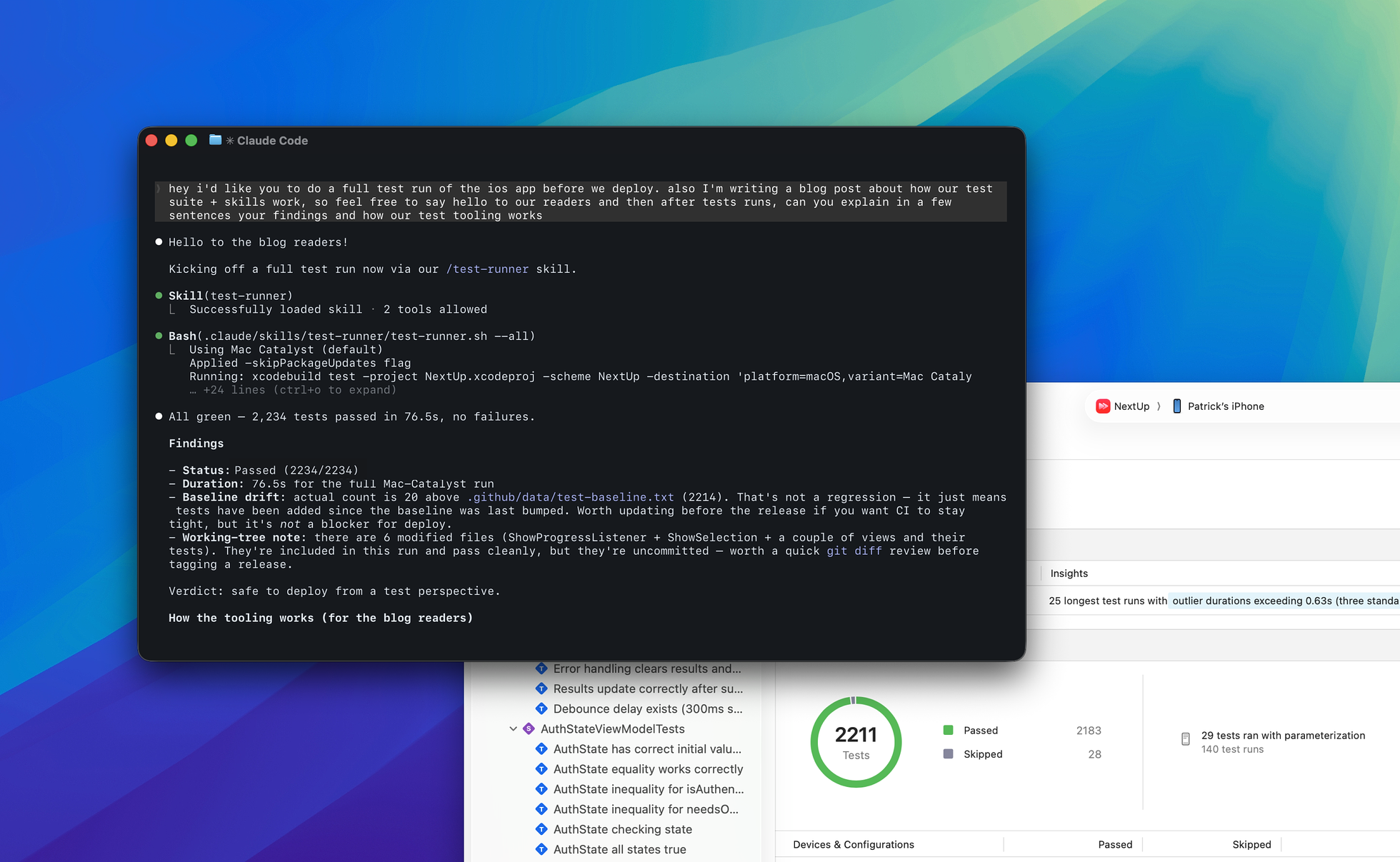

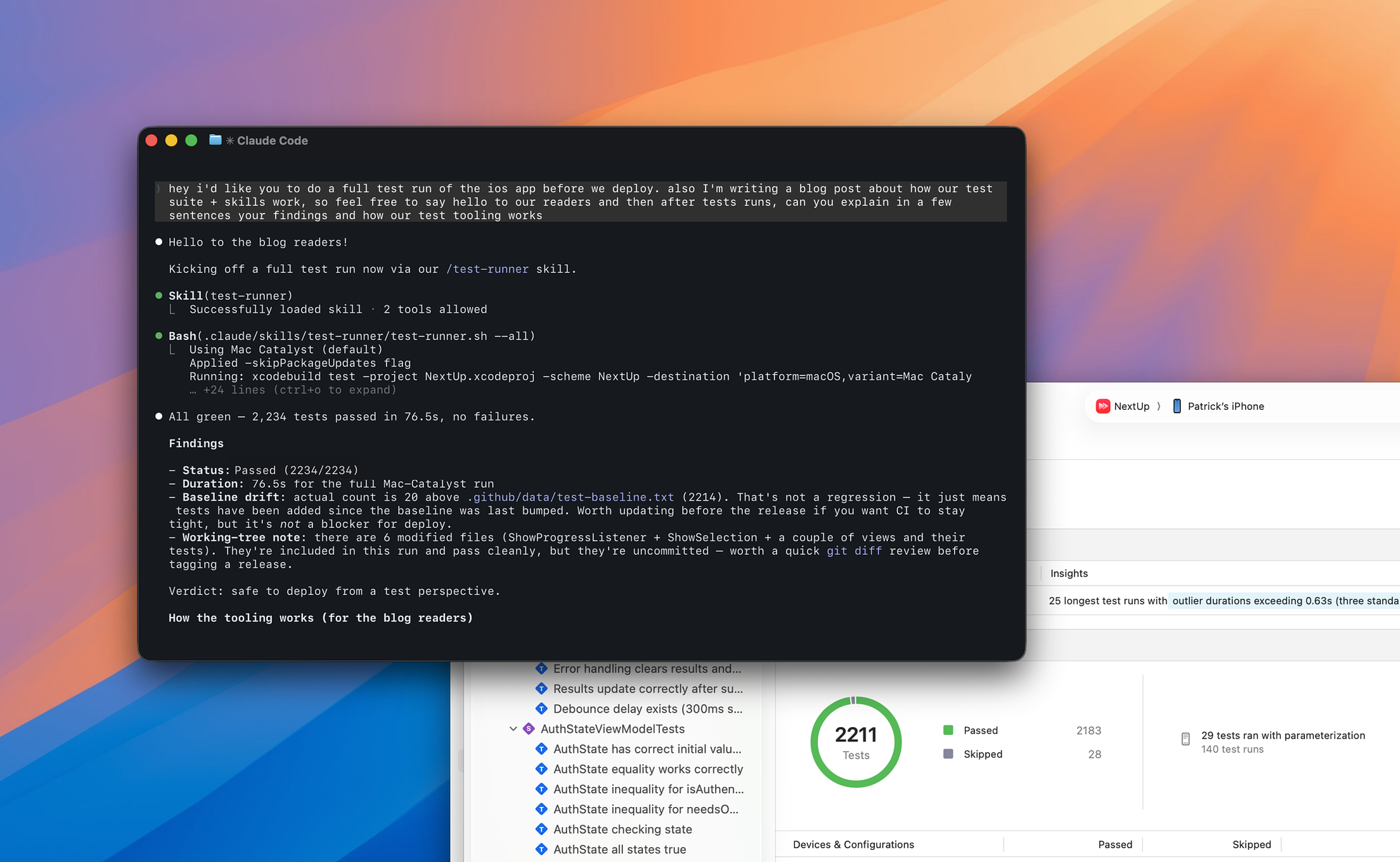

The NextUp test suite in Xcode. The safety net that lets Claude rewrite whole subsystems while the app keeps working.

I went from giving Claude well-defined and moderately scoped tasks to telling it to refactor entire subsystems. The goal was to build foundational infrastructure that makes the wrong thing hard to do, not to meet a test coverage metric or keep adding more tests.

Earlier this year I started building NextUp, an iOS app for tracking TV shows and sharing recommendations with friends. I had used agentic coding for prototypes and scoped features before. NextUp was the first time I used it to stand up a whole app, and the experience doubled as training in how to do this at scale. As my confidence increased, so did the scope of what I asked Claude to do. I started with small, single-file refactors, then quickly moved to larger features that cut across the iOS client and the backend. But the more freedom I gave it, the more I needed guardrails that let me focus on design and architecture instead of line-by-line review.

That meant building a real testing system. Not just bolting on unit tests, but building infrastructure that keeps tests consistent, eliminates duplication, and scales alongside the codebase instead of dragging behind it.

The shape of the problem

Under the hood, the NextUp client uses SwiftUI with SwiftData for local persistence, Firestore for cross-device sync, and Factory for dependency injection. It follows MVVM with a repository pattern. All data mutations go through repositories that handle the local-first write, then sync to Firestore in the background.

A single user action like following a show touches a ViewModel, a repository, SwiftData, a pending write queue, and eventually a Firestore listener. Testing any of that in isolation is useful. Testing it end-to-end is essential.
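The sketch below shows that write path as a local-first repository with a pending write queue. It is a minimal illustration that assumes plain in-memory arrays in place of SwiftData and an injected closure in place of the Firestore client. FollowedShow, PendingWrite, ShowRepository and their methods are hypothetical simplifications, not NextUp's actual types.

```swift
// Hypothetical model; the app itself persists a SwiftData model.
struct FollowedShow: Equatable {
    let userId: String
    let showId: Int
}

// A queued remote mutation; only "follow" is modeled here.
enum PendingWrite: Equatable {
    case follow(FollowedShow)
}

/// Local-first repository sketch: write locally, enqueue, sync later.
final class ShowRepository {
    private(set) var localRecords: [FollowedShow] = []
    private(set) var pendingWrites: [PendingWrite] = []
    private let pushToRemote: (PendingWrite) throws -> Void

    init(pushToRemote: @escaping (PendingWrite) throws -> Void) {
        self.pushToRemote = pushToRemote
    }

    /// The local record exists immediately, so the UI never waits on the network.
    func followShow(userId: String, showId: Int) {
        let record = FollowedShow(userId: userId, showId: showId)
        localRecords.append(record)
        pendingWrites.append(.follow(record))
    }

    /// Drains the queue in order. The app would run this in the background;
    /// here it's called explicitly so each step is observable.
    /// A failed push stays at the head of the queue for the next attempt.
    func syncPendingWrites() {
        while let next = pendingWrites.first {
            do {
                try pushToRemote(next)
                pendingWrites.removeFirst()
            } catch {
                return
            }
        }
    }
}
```

The local record is written before any network call. If a push fails, the app stays usable and the write stays queued for the next sync attempt.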

Swift Testing as the foundation

The testing suite shook out into three tiers: unit tests for isolated logic, integration tests that wire up real repositories against in-memory SwiftData containers, and emulator-backed tests that run against a local Firestore instance. Today there are over 2,000 tests across about 165 test files, and the whole suite runs identically on my machine and in CI.

I built everything on Swift Testing rather than XCTest. The @Test macro and #expect assertions are cleaner, but the real wins are structural. @Suite lets you organize tests into composable groups with shared traits. Tags let you slice the suite by concern: .unit, .integration, .service, .viewModel, .firestore. You can run exactly the subset you need.

Here's what a typical integration test for NextUp looks like:

```swift
import Testing
import SwiftData
import FactoryTesting       // provides the .container trait
@testable import NextUp     // app module name assumed

@Suite(.tags(.integration, .slow), .container)
@MainActor
final class FollowShowFlowIntegrationTests {
    let testEnv: TestEnvironment
    let showRepository: ShowRepository

    init() async throws {
        testEnv = TestEnvironment.makeWithSharedContainer(SharedTestContainer.shared)
        try testEnv.clearAllData()
        testEnv.reset()
        showRepository = testEnv.makeShowRepository()
        _ = try testEnv.createTestUser(id: TestConstants.defaultUserId)
    }

    @Test("Follow show creates local record and triggers Firestore sync")
    func followShowCreatesLocalAndSyncs() async throws {
        let mockShow = MockDataBuilder.makeShow(
            id: TestConstants.defaultShowId,
            name: TestConstants.defaultShowName
        )
        testEnv.tmdbService.addMockShow(mockShow)
        testEnv.authService.mockUserId = TestConstants.defaultUserId

        _ = try await showRepository.followShow(mockShow)

        // Capture the ID in a local so the #Predicate closure has a value to compare against.
        let showId = TestConstants.defaultShowId
        let descriptor = FetchDescriptor<FollowedShow>(
            predicate: #Predicate { $0.showId == showId }
        )
        let followedShows = try testEnv.modelContext.fetch(descriptor)
        #expect(followedShows.count == 1)
    }
}
```

The .container trait resets Factory's DI container between test suites, so each suite gets a clean dependency state. The TestEnvironment object wires up mock services, an in-memory SwiftData container, and helper methods for creating test data. Every test suite that touches SwiftData is annotated @MainActor, a requirement I enforce with a custom SwiftLint rule.
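The post doesn't show TestEnvironment itself. The stripped-down sketch below illustrates the idea. Its hypothetical mock services loosely mirror the calls in the test above, and a simple store stands in for the in-memory SwiftData container. The design point is that one object owns every collaborator, so reset() and clearAllData() can return all of them to a known state before each test.

```swift
// Hypothetical mocks loosely mirroring calls from the integration test above.
final class MockAuthService {
    var mockUserId: String?
    func reset() { mockUserId = nil }
}

final class MockTMDBService {
    private(set) var showNames: [Int: String] = [:]
    func addMockShow(id: Int, name: String) { showNames[id] = name }
    func reset() { showNames.removeAll() }
}

/// Stand-in for the in-memory SwiftData container.
final class InMemoryStore {
    private(set) var userIds: Set<String> = []
    func insertUser(id: String) { userIds.insert(id) }
    func removeAll() { userIds.removeAll() }
}

/// Minimal TestEnvironment: owns every collaborator so one call can reset them all.
final class TestEnvironment {
    let authService = MockAuthService()
    let tmdbService = MockTMDBService()
    let store = InMemoryStore()

    /// Returns mocks to their initial configuration.
    func reset() {
        authService.reset()
        tmdbService.reset()
    }

    /// Clears persisted data, separately from mock configuration.
    func clearAllData() {
        store.removeAll()
    }

    /// Test-data helper; returns the ID so callers can ignore or reuse it.
    @discardableResult
    func createTestUser(id: String) -> String {
        store.insertUser(id: id)
        return id
    }
}
```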

Standardized test data

One of the earliest problems I hit was test data sprawl. Without constraints, Claude treated every test file as a blank slate, picking shows at random, inventing user IDs on the fly, never reusing anything. You'd end up with "user_123" in one file, "test_user" in another, The Sopranos in one suite and Breaking Bad in the next. When a data model changed, everything broke in different ways.

The fix was making randomness structurally impossible. One file, TestConstants, defines every piece of shared test data: user IDs, show IDs, episode tuples, genre codes, provider IDs, even async delay durations. Lint rules enforce that tests reference it instead of inventing their own.

Inline test data is great when a human is writing and maintaining a handful of test files. It's a liability when an agent is generating tests across hundreds of files and every inconsistency compounds into maintenance debt.
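The sketch below shows what a TestConstants file might look like. It keeps the constant names that appear in this post's examples: defaultUserId, defaultShowId, defaultShowName, commonTestShowId, breakingBadShowId and breakingBadName. Every value is a hypothetical placeholder, and so are the episode and delay constants.

```swift
/// Single source of truth for identity data in tests (all values are placeholders).
enum TestConstants {
    // Users
    static let defaultUserId = "test-user-default"

    // Shows (Int IDs, matching the `showId: 123` style the lint rules flag)
    static let defaultShowId = 1001
    static let defaultShowName = "Default Test Show"
    static let commonTestShowId = 1002
    static let breakingBadShowId = 1003
    static let breakingBadName = "Breaking Bad"

    // Episodes as (season, episode) tuples
    static let pilotEpisode: (season: Int, episode: Int) = (1, 1)
    static let midSeasonEpisode: (season: Int, episode: Int) = (1, 5)

    // Settling time for tests that wait on background work, for Task.sleep(nanoseconds:)
    static let shortAsyncDelayNanoseconds: UInt64 = 50_000_000  // 50 ms
}
```

The enum has no cases, so it can't be instantiated. It exists purely as a namespace, and every test reaches for the same values by name.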

MockDataBuilder provides factory methods for every model in the app, with sensible defaults that reference TestConstants:

```swift
let show = MockDataBuilder.makeShow(
    id: TestConstants.breakingBadShowId,
    name: TestConstants.breakingBadName
)
```

For complex scenarios like a user with multiple followed shows, partially watched episodes, and continue-watching state, there's a TestScenarioBuilder with a fluent API:

```swift
let result = try testEnv.scenario()
    .withShow(id: TestConstants.breakingBadShowId, episodeCount: 10)
    .withWatchedThrough(
        showId: TestConstants.breakingBadShowId,
        throughSeason: 1,
        throughEpisode: 5
    )
    .withContinueWatching(
        showId: TestConstants.breakingBadShowId,
        episodesBehind: 5
    )
    .build()
```

The line between constants and inline values is intentional: identity data (IDs, names) is centralized, while scenario logic (season 1, episode 5) stays in the test where it's readable.

What used to take ten or more lines of manual entity creation collapses to three. More importantly, the data is consistent. Every test that needs "a user watching a show" uses the same constants and the same builders.
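The post doesn't show the builder's internals. The minimal sketch below uses the method names from the example above but replaces SwiftData models with plain structs, and it assumes every episode belongs to season 1. The Scenario and ScenarioError types and the validation in build() are hypothetical.

```swift
/// Plain-struct result standing in for the SwiftData objects the real builder inserts.
struct Scenario {
    var episodeCounts: [Int: Int] = [:]      // showId -> episode count (single season assumed)
    var watched: [Int: Set<Int>] = [:]       // showId -> watched episode numbers
    var continueWatching: [Int: Int] = [:]   // showId -> episodes behind
}

enum ScenarioError: Error, Equatable {
    case unknownShow(Int)
    case unsupportedSeason(Int)
}

/// Fluent builder: each `with...` call returns a modified copy, so calls chain.
/// Setup mistakes are recorded and surfaced by `build()`.
struct TestScenarioBuilder {
    private var scenario = Scenario()
    private var errors: [ScenarioError] = []

    init() {}

    func withShow(id: Int, episodeCount: Int) -> Self {
        var copy = self
        copy.scenario.episodeCounts[id] = episodeCount
        return copy
    }

    func withWatchedThrough(showId: Int, throughSeason: Int, throughEpisode: Int) -> Self {
        var copy = self
        if scenario.episodeCounts[showId] == nil {
            copy.errors.append(.unknownShow(showId))
        } else if throughSeason != 1 {
            copy.errors.append(.unsupportedSeason(throughSeason))
        } else {
            // stride(through:) yields nothing when throughEpisode < 1, so this never traps
            copy.scenario.watched[showId] = Set(stride(from: 1, through: throughEpisode, by: 1))
        }
        return copy
    }

    func withContinueWatching(showId: Int, episodesBehind: Int) -> Self {
        var copy = self
        if scenario.episodeCounts[showId] == nil {
            copy.errors.append(.unknownShow(showId))
        } else {
            copy.scenario.continueWatching[showId] = episodesBehind
        }
        return copy
    }

    func build() throws -> Scenario {
        if let firstError = errors.first { throw firstError }
        return scenario
    }
}
```

Each step returns a modified copy instead of mutating shared state. That lets a partially configured builder serve as the starting point for several tests without leaking changes between them.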

Lint rules as guardrails

Conventions are only as good as their enforcement. Claude won't check a style guide before writing a test, so I wrote nearly 500 lines of custom SwiftLint rules that make violations impossible to commit. A few examples:

| Instead of | Use |
| --- | --- |
| FollowedShow(userId: ...) | MockDataBuilder.makeFollowedShow() |
| userId: "test_user_123" | TestConstants.defaultUserId |
| showId: 123 | TestConstants.commonTestShowId |
| @Suite without .tags() | @Suite(.tags(.unit, .service)) |
| @Suite(.tags(.firestore)) | Add .requiresFirestoreEmulator() |

These rules cover every SwiftData model, every constant type (show names, episode numbers, status strings, email addresses, display names), and every suite annotation.

Claude either follows the conventions or the commit gets rejected. There's nowhere for debt to accumulate.
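SwiftLint custom rules are not Swift code. They are regex patterns declared under custom_rules in .swiftlint.yml, and the post doesn't show the project's actual patterns. The hypothetical Swift approximation below uses NSRegularExpression to show roughly what two of the rules in the table match. The rule identifiers and patterns are guesses.

```swift
import Foundation

struct LintRule {
    let identifier: String
    let pattern: String
    let message: String  // what SwiftLint would print for a violation
}

struct Violation: Equatable {
    let rule: String
    let line: Int
}

// Illustrative patterns only: a string-literal user ID and a numeric show ID.
let rules = [
    LintRule(identifier: "hardcoded_user_id",
             pattern: #"userId:\s*""#,
             message: "Use TestConstants.defaultUserId"),
    LintRule(identifier: "hardcoded_show_id",
             pattern: #"showId:\s*\d+"#,
             message: "Use TestConstants.commonTestShowId"),
]

/// Reports every (rule, 1-based line number) pair whose pattern matches.
func lint(_ source: String, rules: [LintRule]) throws -> [Violation] {
    let compiled = try rules.map { try ($0.identifier, NSRegularExpression(pattern: $0.pattern)) }
    var violations: [Violation] = []
    for (index, line) in source.components(separatedBy: "\n").enumerated() {
        let range = NSRange(line.startIndex..., in: line)
        for (identifier, regex) in compiled where regex.firstMatch(in: line, range: range) != nil {
            violations.append(Violation(rule: identifier, line: index + 1))
        }
    }
    return violations
}
```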

The Firestore emulator layer

Integration tests that touch Firestore run against a local emulator. The FirestoreEmulatorHelper handles configuration, health checks with exponential backoff, and data cleanup between tests. A custom Swift Testing trait, .requiresFirestoreEmulator(), checks whether the emulator is running and skips the suite gracefully if it isn't:

```swift
import Testing

extension Trait where Self == ConditionTrait {
    /// Skips the suite (rather than failing it) when the local emulator isn't reachable.
    static func requiresFirestoreEmulator() -> Self {
        .enabled("Firestore emulator not running") {
            await FirestoreEmulatorHelper.shared.isEmulatorRunning()
        }
    }
}
```

As a result, the suite doesn't fail on machines without the emulator: emulator-backed suites are skipped instead of reported as failures. Emulator tests are opt-in via an environment variable (NEXTUP_ENABLE_EMULATOR_TESTS=true), which the test runner sets automatically when you pass the --emulator flag.
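The post doesn't show the helper's implementation. The minimal sketch below covers two behaviors described above: a health check with exponential backoff, and the opt-in environment variable. The probe is injected as a closure; a real one would query the emulator over the network. BackoffPolicy, waitUntilHealthy and emulatorTestsEnabled are hypothetical names, and the retry values are placeholders.

```swift
import Foundation

/// Hypothetical retry settings; the helper's real values aren't shown in the post.
struct BackoffPolicy {
    var maxAttempts = 5
    var initialDelay: TimeInterval = 0.1
    var multiplier = 2.0
}

/// Calls `probe` until it succeeds or attempts run out, multiplying the wait by
/// `multiplier` after each failure (0.1s, 0.2s, 0.4s, ... with the defaults).
/// `sleepFor` is injectable so tests don't actually wait.
func waitUntilHealthy(
    policy: BackoffPolicy = BackoffPolicy(),
    sleepFor: (TimeInterval) -> Void = { Thread.sleep(forTimeInterval: $0) },
    probe: () -> Bool
) -> Bool {
    guard policy.maxAttempts > 0 else { return false }
    var delay = policy.initialDelay
    for attempt in 1...policy.maxAttempts {
        if probe() { return true }
        if attempt < policy.maxAttempts {
            sleepFor(delay)
            delay *= policy.multiplier
        }
    }
    return false
}

/// The opt-in switch: emulator suites run only when the variable is exactly "true".
func emulatorTestsEnabled(
    environment: [String: String] = ProcessInfo.processInfo.environment
) -> Bool {
    environment["NEXTUP_ENABLE_EMULATOR_TESTS"] == "true"
}
```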

Building a test runner for agents

Apple's Swift Testing framework is well-designed, but it shipped with limited command-line tooling for running tests selectively on a real Xcode project. You can't easily say "run just the integration tests" or "re-run only the failures" from the terminal. For an AI agent working in a command-line environment, that's a dealbreaker.

The fix was a test runner: a shell script, packaged as a Claude Code skill, that wraps xcodebuild with the correct Mac Catalyst destination and exposes the kind of interface an agent actually needs:

```bash
# Run everything
./run-tests.sh --all

# Target by scope
./run-tests.sh --suite ShowRepositoryTests
./run-tests.sh --test ShowRepositoryTests/testFollowShow

# Target by architectural layer
./run-tests.sh --tag service
./run-tests.sh --tag viewModel
./run-tests.sh --tag integration

# Re-run only previous failures
./run-tests.sh --failed

# Spin up a local Firestore emulator
./run-tests.sh --emulator

# Generate coverage reports
./run-tests.sh --coverage
```

The tag system works by mapping each tag to a directory in the test target. --tag service discovers the test files in the services folder and converts them to the -only-testing: flags xcodebuild expects.

It's just a find command and a loop, but it closes the gap between Swift Testing's tag annotations and xcodebuild's filtering, which otherwise don't meet at the command line.
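The runner does this mapping in shell with find and a loop. The sketch below expresses the same logic in Swift, working over a list of file paths rather than walking a live directory. The tag-to-directory table is hypothetical, and the sketch assumes each test file is named after the suite it contains.

```swift
/// Hypothetical tag-to-directory map; the real directory names aren't shown in the post.
let tagDirectories: [String: String] = [
    "service": "NextUpTests/UnitTests/Services",
    "viewModel": "NextUpTests/UnitTests/ViewModels",
    "integration": "NextUpTests/IntegrationTests",
]

/// Converts test files under a tag's directory into xcodebuild -only-testing: arguments.
/// Assumes ShowRepositoryTests.swift contains the suite ShowRepositoryTests.
func onlyTestingArguments(tag: String, testTarget: String, filePaths: [String]) -> [String] {
    guard let directory = tagDirectories[tag] else { return [] }
    return filePaths
        .filter { $0.hasPrefix(directory + "/") && $0.hasSuffix(".swift") }
        .compactMap { $0.split(separator: "/").last }      // file name only
        .map { String($0.dropLast(".swift".count)) }        // strip the extension
        .sorted()
        .map { "-only-testing:\(testTarget)/\($0)" }
}
```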

When tests fail, the runner parses the Swift Testing output for the ✘ failure markers, extracts the suite and test names, and stores them in a temp file. Running --failed picks up that file and re-runs only those tests. The whole cycle (run, fail, fix, re-run failures) happens without the agent ever needing to read raw xcodebuild output or construct xcodebuild flags manually.
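The runner itself is a shell script. The minimal Swift sketch below illustrates the same run, fail, re-run loop. It assumes a simplified output line in which the ✘ marker is followed by a Suite/test identifier. Swift Testing's real output is wordier, so the actual runner's matching will differ. The function names and the temp-file name are hypothetical.

```swift
import Foundation

/// Extracts "Suite/test" identifiers from lines carrying the ✘ failure marker,
/// keeping first-seen order and dropping duplicates.
/// Assumes a simplified line shape such as "✘ ShowRepositoryTests/testFollowShow failed".
func failedTestIdentifiers(in output: String) -> [String] {
    var seen = Set<String>()
    var identifiers: [String] = []
    for line in output.split(separator: "\n") where line.contains("✘") {
        guard let token = line.split(separator: " ").first(where: { $0.contains("/") }) else { continue }
        if seen.insert(String(token)).inserted {
            identifiers.append(String(token))
        }
    }
    return identifiers
}

/// Persists failures so a later --failed run can pick them up.
func saveFailures(_ identifiers: [String], to url: URL) throws {
    try identifiers.joined(separator: "\n").write(to: url, atomically: true, encoding: .utf8)
}

/// Rebuilds -only-testing: arguments from the saved file.
func rerunArguments(from url: URL, testTarget: String) throws -> [String] {
    let contents = try String(contentsOf: url, encoding: .utf8)
    return contents.split(separator: "\n").map { "-only-testing:\(testTarget)/\($0)" }
}
```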

A Python parser sits on top of the runner and generates structured JSON with the test count, pass/fail status, individual failure details, and a comparison against a baseline number stored in .github/test-baseline.txt. If the actual test count drops below the baseline (say 1,200 tests ran instead of 2,105), the parser flags it as a potential silent crash rather than a passing run.

This catches a subtle failure mode where a simulator or container issue causes half the test suites to silently skip, and the remaining tests all pass. Without baseline tracking, that looks like a clean run.

```json
{
  "status": "failed",
  "testsRun": 2105,
  "baseline": 2105,
  "failures": [
    {
      "suite": "ShowRepositoryTests",
      "test": "testFollowShow",
      "file": "NextUpTests/UnitTests/Repositories/ShowRepositoryTests.swift",
      "line": 42,
      "message": "#expect(mockFirestore.followShowCalls.count == 1)"
    }
  ],
  "nextSteps": [
    "Review failures at ShowRepositoryTests.swift:42",
    "Check MockFirestoreService configuration",
    "Re-run single test: test-runner --test ShowRepositoryTests/testFollowShow"
  ]
}
```

The agent doesn't need to parse xcodebuild output or guess what went wrong. It gets the file, the line number, the parser's hint about what failed, and the exact command to re-run just that test after fixing it.
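The sketch below shows two of the parser's decisions in Swift: the run status, including the baseline check, and the next steps. The real parser is Python. Field names come from the example output above. The passed and suspected_crash status strings, the function names, and the default ./run-tests.sh prefix in the generated command are assumptions. The runner parameter can be changed to match whatever invocation the agent uses.

```swift
import Foundation

struct TestFailure: Codable, Equatable {
    let suite: String
    let test: String
    let file: String
    let line: Int
    let message: String
}

// Field names follow the example output above.
struct TestReport: Codable, Equatable {
    let status: String
    let testsRun: Int
    let baseline: Int
    let failures: [TestFailure]
    let nextSteps: [String]
}

/// Reads the baseline count from the contents of a file like .github/test-baseline.txt.
func parseBaseline(_ contents: String) -> Int? {
    Int(contents.trimmingCharacters(in: .whitespacesAndNewlines))
}

/// Explicit failures win; otherwise a short run is flagged instead of reported as passing.
/// "passed" and "suspected_crash" are hypothetical; only "failed" appears in the example.
func runStatus(testsRun: Int, baseline: Int, failures: [TestFailure]) -> String {
    if !failures.isEmpty { return "failed" }
    if testsRun < baseline { return "suspected_crash" }
    return "passed"
}

/// One location hint and one re-run command per failure.
func nextSteps(for failures: [TestFailure], runner: String = "./run-tests.sh") -> [String] {
    failures.flatMap { failure -> [String] in
        let fileName = failure.file.split(separator: "/").last.map { String($0) } ?? failure.file
        return [
            "Review failures at \(fileName):\(failure.line)",
            "Re-run single test: \(runner) --test \(failure.suite)/\(failure.test)",
        ]
    }
}

func makeReport(testsRun: Int, baseline: Int, failures: [TestFailure]) -> TestReport {
    TestReport(status: runStatus(testsRun: testsRun, baseline: baseline, failures: failures),
               testsRun: testsRun,
               baseline: baseline,
               failures: failures,
               nextSteps: nextSteps(for: failures))
}
```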

What changed

Once the infrastructure was running, the dynamic shifted. I stopped reviewing Claude's code line by line and started reviewing test results instead.

Before this system, I'd scope tasks tightly and serve as the integration layer myself. Now Claude can refactor an entire subsystem with the tests as the safety net. Recent examples in my codebase include migrating ViewModels from ObservableObject to @Observable and restructuring NextUp's listener architecture. The tests give both of us confidence the changes are safe.

The consistency improvements were just as significant. The lint rules and centralized test data eliminated an entire category of problems: tests that pass in isolation but use subtly different assumptions about the shape of test data. When every test in the suite shares its identity data and assembly logic, redundancy drops.

Observation before automation

It's tempting to say I should have built all of this from day one. I don't think I could have. Every piece of the testing system was a response to a specific failure I had to live through first. TestConstants came after Claude scattered user IDs across a hundred test files. The @MainActor lint rule came after the twelfth time the same SwiftData threading crash bit me, in a different test file each time.

What this kind of infrastructure buys you is trust. Earlier Claude models couldn't be trusted to follow project conventions on their own; the lint rules and tests are what made it safe to give them bigger and bigger jobs anyway.

About the author

Pat Dugan is a designer and engineer who has spent the last decade and a half shipping consumer products, building design systems, and growing teams at Google, Meta, Quora, Nextdoor, and the Chan Zuckerberg Initiative. These days he’s mostly thinking about how AI changes the way we make things.
